Electronics | Free Full-Text | Accelerating Neural Network Inference on FPGA-Based Platforms—A Survey

Figure 5 from Sticker: A 0.41-62.1 TOPS/W 8Bit Neural Network Processor with Multi-Sparsity Compatible Convolution Arrays and Online Tuning Acceleration for Fully Connected Layers | Semantic Scholar

Figure 1 from A 3.43TOPS/W 48.9pJ/pixel 50.1nJ/classification 512 analog neuron sparse coding neural network with on-chip learning and classification in 40nm CMOS | Semantic Scholar

PDF) BRein Memory: A Single-Chip Binary/Ternary Reconfigurable in-Memory Deep Neural Network Accelerator Achieving 1.4 TOPS at 0.6 W

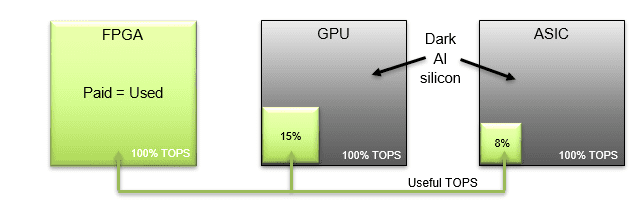

Mipsology Zebra on Xilinx FPGA Beats GPUs, ASICs for ML Inference Efficiency - Embedded Computing Design

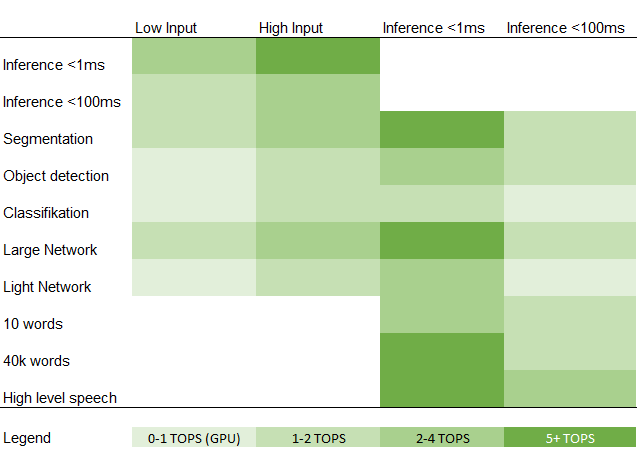

Not all TOPs are created equal. Deep Learning processor companies often… | by Forrest Iandola | Analytics Vidhya | Medium